The field of Information Theory is a fascinating lens through which to examine the world. It is applicable to a surprisingly broad range of topics and its implications reach into philosophy, psychology, communication, and even theology. Each subject area could produce a volume of material, but to explore any of them requires a foundation describing what information is and how to apply it. The most pragmatic place to begin is with the development of the modern scientific description of information.

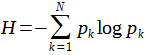

The rigorous characterization of information began in 1948, when Claude Shannon published the paper “A Mathematical Theory of Communication.” His focus was on the process of transmitting information via a communication channel, but his mathematical characterization is widely applicable. Among the concepts in the paper is a simple formula that quantifies information in terms of entropy,

$$H = -\sum_{k=1}^{N} p_k \log_2 p_k,$$

where $H$ is the entropy, $N$ is the total number of possible outcomes of the process in question (a.k.a. the event), and $p_k$ is the probability of outcome $k$ occurring. (Probability Theory Aside: The probability of an outcome is a number between 0 and 1 that gives the fraction of trials of the event in which that outcome occurs. For example, rolling a six-sided die is an event with 6 possible outcomes. A fair die has an equal probability for all outcomes: $p_k$ = 1/6 ≈ 0.167 = 16.7%. Shannon’s paper explains why the logarithm is in the formula, if you are curious.)

For example, the event might be that your friend will send you a note with a letter of the English alphabet written on it. There are 26 possible outcomes of this event, and each outcome has a chance of 1/26 ($p_k$ ≈ 0.038), assuming your friend picks the letter uniformly at random. The summation in the formula produces the entropy of the event, which quantifies how much uncertainty exists in the event. In this case, the entropy is 4.7 bits.

The bit is the unit of measurement for information. It is defined by the simple event with only two equally probable outcomes, e.g. a coin flip (heads or tails) or a binary computer digit (0 or 1). The entropy of a binary digit is 1 bit, and the amount of information from other events can be measured in terms of bits. (To measure in bits, the logarithm in the formula is taken base 2.) Because there are a lot more than two letters in the English alphabet, there is more uncertainty about the outcome of choosing a random letter and therefore more entropy and more potential for information transfer.
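As a minimal sketch of the entropy calculation described above, the following Python snippet evaluates the sum for a fair coin, a fair die, and a uniformly random letter. The function name `entropy_bits` and the variable names are illustrative choices, not anything taken from Shannon’s paper.

```python
import math

def entropy_bits(probabilities):
    """Shannon entropy, -sum(p_k * log2(p_k)), measured in bits."""
    # Outcomes with zero probability contribute nothing to the sum.
    return -sum(p * math.log2(p) for p in probabilities if p > 0)

coin = [1 / 2] * 2        # fair coin flip: 2 equally likely outcomes
die = [1 / 6] * 6         # fair six-sided die: 6 equally likely outcomes
letters = [1 / 26] * 26   # one letter chosen uniformly at random

coin_bits = entropy_bits(coin)
die_bits = entropy_bits(die)
letter_bits = entropy_bits(letters)

print(f"coin:   {coin_bits:.2f} bits")
print(f"die:    {die_bits:.2f} bits")
print(f"letter: {letter_bits:.2f} bits")
```

For equally likely outcomes the sum collapses to the base-2 logarithm of the number of outcomes, which is why the coin comes out at exactly 1 bit and the random letter at about 4.7 bits.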

A subtle point to understand is that entropy and information are the same thing, but also opposites. Entropy is uncertainty. Information comes from the resolution of uncertainty. The formula measures the amount of uncertainty in an event (entropy), and information is the result when the uncertainty resolves. If I had a piece of paper with a letter written on it and dropped the paper into a campfire, I would lose 4.7 bits of information because the paper that identified one of 26 possible letters has been destroyed. The certainty about which letter was written is lost, and we are left in a state of uncertainty. This gives a sort of directionality to the flow of information. Because of thermodynamics, the concept of entropy carries notions of disorder and decay. Entropy here focuses on the related concept of uncertainty. The quantity of entropy in a system tells how much uncertainty is in that system, and information is the result of that uncertainty being removed.

The examples of random events, like a coin flip, producing information can be confusing. If I flip a coin and record the result, I am not getting any information other than the results of my experiment, which has very limited use. But imagine a scenario where two people have to communicate using some communication channel. Matt Damon’s character in The Martian comes to mind. (MINOR SPOILER ALERT)

The background for this scene is that he is stranded on Mars and manages to recover and rehabilitate a rover that had been sent to the planet on a previous NASA mission. He enables the camera on the rover, which sends its signal back to Earth. He has a communication channel, but no obvious way to use it. NASA can only look at pictures and rotate the camera through 360 degrees. His first design for making the information channel function is to attach hexadecimal characters to sticks in the ground and have NASA point the camera at the various characters to communicate a message. This is obviously an inefficient means of communication, but it is effective. It also illustrates the relationship between uncertainty and communication. Matt Damon’s character is completely uncertain about the symbols that will arrive, but it is not a random stream of characters. NASA is controlling what characters arrive. If one party controls the event and another party observes its outcomes, the controlling party can transmit information to the observing party. In this scenario, the outcomes are not random at all. They are determined by the person transmitting the information. The observer, who is unaware of what the transmitter is going to say, is the one with the uncertainty, and from his perspective the channel can output any character, each with a particular probability of occurrence. As characters arrive, the uncertainty resolves, and the receiver acquires information.
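To make the hexadecimal channel concrete, here is a small sketch of how a text message could be spelled out with 16 signs. It assumes each character is sent as its two-digit ASCII code; that mapping, the example message, and the function names are assumptions for illustration. If all 16 signs were equally likely, each camera pointing would carry at most 4 bits.

```python
import math

def to_hex_symbols(message):
    """Spell a message as hexadecimal symbols, two per ASCII character."""
    return list(message.encode("ascii").hex().upper())

def from_hex_symbols(symbols):
    """Recover the text from a sequence of hexadecimal symbols."""
    return bytes.fromhex("".join(symbols)).decode("ascii")

message = "HELLO"                      # hypothetical message
symbols = to_hex_symbols(message)      # one camera pointing per symbol
decoded = from_hex_symbols(symbols)

# Upper bound on information per symbol, assuming 16 equally likely signs.
bits_per_symbol = math.log2(16)

print(symbols)
print(decoded, f"({len(symbols)} pointings, up to {bits_per_symbol:.0f} bits each)")
```

The receiver cannot predict the next symbol, so each pointing resolves some of his uncertainty, even though the sender chose every symbol deliberately.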

Thinking about the process more deeply, one might notice that the results of the event are not always completely random even to the observer. This keen observation points to the fact that messages are often sent with more symbols or letters than actual bits of information. Returning to the case where a friend writes a letter on a piece of paper and hands it to you, assume now that the letter is one in a string of characters making up a message (written in the English language) that he wants to convey. Each letter that your friend gives you could be assumed to be randomly selected from words in the dictionary. In this case, the chances of getting any particular letter will not be evenly distributed. Instead, the letters will occur with the frequency indicated in the figure below.

You are much more likely to see an “E” than a “Q.” If you received the letters “INFO,” you might strongly suspect that the rest of the letters will be “RMATION,” although it could be the word “INFORM” or some other word starting with those letters. The amount of information packed into the English language is not at maximum capacity. A conceptual “perfect” language would use all of its characters with equal frequency, and each character would be uncorrelated with the characters around it. In such a language, every letter of every word is needed to ascertain the meaning of a message. Although highly efficient, this makes a language fragile: a single error can cause miscommunication. Messages are often sent with redundancy, even between computers, in case one of the characters gets corrupted. With the added redundancy, the information in the message can be preserved even in the case of an imperfect communication device. A language with 26 letters can potentially convey 4.7 bits of information per letter, i.e.

$$H = -\sum_{k=1}^{26} \frac{1}{26} \log_2 \frac{1}{26} = \log_2 26 \approx 4.7 \text{ bits},$$

but the English language, with the distribution of letter frequencies shown above, conveys about 4.2 bits of information per letter. The English language is therefore about 90% efficient (4.2/4.7 ≈ 0.89) in transmitting information via the letters available in it.
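The comparison between the uniform and the English letter distributions can be sketched in a few lines of Python. The frequency table below is a set of approximate, commonly quoted percentages for English text; it is an assumption for illustration and not the data behind the figure referenced above, so the result should only be expected to land near the 4.2 bits quoted in the text.

```python
import math

# Approximate letter frequencies in English text, in percent.
# ASSUMPTION: illustrative values, not the data behind the article's figure.
LETTER_FREQ_PERCENT = {
    "E": 12.70, "T": 9.06, "A": 8.17, "O": 7.51, "I": 6.97, "N": 6.75,
    "S": 6.33, "H": 6.09, "R": 5.99, "D": 4.25, "L": 4.03, "C": 2.78,
    "U": 2.76, "M": 2.41, "W": 2.36, "F": 2.23, "G": 2.02, "Y": 1.97,
    "P": 1.93, "B": 1.49, "V": 0.98, "K": 0.77, "J": 0.15, "X": 0.15,
    "Q": 0.10, "Z": 0.07,
}

def entropy_bits(probabilities):
    """Shannon entropy, -sum(p_k * log2(p_k)), measured in bits."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)

# Normalize so the probabilities sum to exactly 1 despite rounding in the table.
total = sum(LETTER_FREQ_PERCENT.values())
probs = [value / total for value in LETTER_FREQ_PERCENT.values()]

h_english = entropy_bits(probs)   # entropy per letter with uneven frequencies
h_max = math.log2(26)             # entropy per letter if all were equally likely
efficiency = h_english / h_max

print(f"English letters: {h_english:.2f} bits per letter")
print(f"Uniform letters: {h_max:.2f} bits per letter")
print(f"Efficiency:      {efficiency:.0%}")
```

This only accounts for single-letter frequencies. Correlations between neighboring letters, such as the “INFO” example, reduce the information per letter further.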

The analysis of letters could next give way to a fascinating analysis of the grouping of letters into symbols and words which have their own frequencies of use. However, that lengthy analysis is left to the interested reader with a recommendation of the book “An Introduction to Information Theory: Symbols, Signals and Noise” by John R. Pierce.

Application to Economics

While I plan to unpack the ramifications of information theory in various contexts and fields in separate articles, here is a short application of information theory to the field of Economics.

Economics is intimately intertwined with information. Milton Friedman astutely describes the critical function of prices in a free market as conduits for information. A price increase tells consumers that an item is currently in higher demand. It tells a supplier that they need to produce more. When the price is free to vary, it conveys this information accurately. Once production increases and supplies expand, the price drops. The supply, demand, and price all reach an equilibrium position if environmental conditions do not change, but in the practical world, conditions are always changing. Supply lines get hindered. Source material becomes more or less abundant. Items gain or lose popularity in culture. New inventions are introduced. The free market is capable of reacting to all of these inputs via information flow to keep the economy running efficiently. Information is the key to an efficient economy, and in a free market, prices are able to transmit information to all interested parties in a relatively efficient manner.

The price of an item is only a one-number metric that characterizes it, but it contains information derived from countless inputs. In this way, the price is a sort of function that accepts all of the relevant inputs and maps them to a single number that conveys the information to the interested parties. Innumerable variables feed into the price of something: supply costs, expected future market conditions, advertising effectiveness, branding, competitor prices, etc. All of these factors are able to sway its value. Because the price can change over time, it can convey information from a lot of variables in a compact, single number.

Combined with the total number of sales, a price is also capable of transmitting information in both directions, from the producer to the buyer and from the buyer to the producer. The price tells consumers how difficult it is to make a product. It incorporates all of the relevant inputs and gives the buyer a number. The effort and resources that go into building a truck, for example, are reflected in its price. The demand signal flows back to the supplier through how many items are purchased at each price. If an item is very difficult to make and nobody wants it, the price will convey that information to the producer. He will find that the price at which people will buy the item is below the cost of the resources it takes to produce, and he will have to produce something else if he wants to stay in business. With all of the factors that a price takes in as inputs and with the two-directional nature of the information flow, the information that prices account for in a free market economy is startling to imagine.

To get an idea of how much information flows through price changes, consider Amazon as a product seller. Amazon sells around 385 million products. As a rough approximation to the behavior of a product’s price, assume that a price will take one of a set of 1000 discrete prices with a Gaussian distribution about its mean price according to the figure below,

Here, the mean price is taken to be $25 and the price fluctuates between about $20 and $30. (Note: there are 1000 discrete prices between $20 and $30 assuming the penny is the smallest denomination.) Obviously there are items with prices that differ greatly from this, and the fluctuations might be much larger or much smaller than the distribution above. This is a gross simplification of the situation with the purpose of putting a rough number to the amount of information that the prices convey. If the prices are only allowed to change daily, then the amount of information that they can convey across all of the products is 41,295 bits per second. This corresponds to roughly 9.3 bits per product per day, multiplied by 385 million products and divided by the 86,400 seconds in a day. That’s enough information flow to continuously transmit about 8 talk radio stations. A price is a single number, but when you consider all of the different items in the economy and the amount that their prices can vary, there is a river of information flowing through the prices in a free economy.
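The estimate above can be roughly reproduced with a short sketch. The text does not state the width of the Gaussian, so the standard deviation below is an assumed value chosen so that nearly all of the distribution falls between $20 and $30. The 1000-price grid and all variable names are likewise illustrative choices.

```python
import math

NUM_PRODUCTS = 385_000_000        # product count quoted in the text
SECONDS_PER_DAY = 24 * 60 * 60    # prices assumed to change once per day
MEAN_CENTS = 2500                 # $25.00 mean price from the text
SIGMA_CENTS = 150                 # ASSUMPTION: $1.50 standard deviation, not given in the text

# 1000 discrete penny prices from $20.00 up to $29.99.
price_grid = range(2000, 3000)

# Unnormalized Gaussian weight for each price, then normalize to probabilities.
weights = [math.exp(-0.5 * ((cents - MEAN_CENTS) / SIGMA_CENTS) ** 2)
           for cents in price_grid]
total = sum(weights)
probs = [w / total for w in weights]

# Entropy of one product's daily price, in bits.
bits_per_price = -sum(p * math.log2(p) for p in probs if p > 0)

# Every product reveals one price per day; spread that over the day.
bits_per_second = NUM_PRODUCTS * bits_per_price / SECONDS_PER_DAY

print(f"{bits_per_price:.2f} bits per price change")
print(f"{bits_per_second:,.0f} bits per second across all products")
```

With this assumed spread the result is on the order of 40,000 bits per second, in the same range as the figure quoted above. A narrower or wider distribution would lower or raise the entropy per price, up to a ceiling of about 10 bits if all 1000 prices were equally likely.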

In a centrally controlled economy, information is divorced from prices, and the flow of information is greatly inhibited. Take, for example, rent control on housing. In a free economy, the price is allowed to vary in accordance with the demand for each particular housing unit. In highly desirable locations, rent soars. Someone sees the astronomical rent and says that it is not fair, in some sense of fairness. Their solution is to have the government coerce the market to control rent prices. This removes the correlation between the demand and the price for each housing unit, but it does not make the situation any more fair.

In the free market, the highly desirable housing goes to the person who is willing to direct the most resources toward using it. In the controlled market, the resident of each housing unit is determined some other way, usually one that is considered less fair than the free market. Someone will get one of the coveted housing locations because they have a close relationship with the decision maker (cronyism), or someone will decide who gets the housing based upon their own personal preference (despotism), or there will be a lottery (random chance), or someone will get the housing because of unsanctioned forms of payment (black market/bribery). Of all of these alternatives, random chance would probably be considered the most fair, but the fairness is subjective. A legitimate counterargument would say that the person who will pay more for the housing has a deeper desire to live there and will therefore be a better resident.

Leaving the judgment of fairness aside, the only economy that actually conveys information about market conditions is the free economy. When a central government controls prices, it is certainly not accounting for all of the factors that go into the price function of a free economy. Sometimes a controlled price is set by only one factor: someone’s opinion about what seems fair.

Price controls destroy the demand signal coming through the price. The loss of information has far-reaching consequences. For example, construction companies do not know how much housing to build because the price does not convey the demand. When government controls prices, the result can be situations like that in China, where construction companies build massive buildings that sit empty for years. The lost information results in massive amounts of waste in the economy.

Prices are not arbitrary numbers that sellers assign to things. They are the output of a highly complex function that takes into account innumerable sources of information. As the output changes over time, information flows through the price to the individuals involved in buying and selling the corresponding product. Information theory helps to describe that information, quantify it, and show what is lost when prices are not free to vary, which explains why markets without free prices are so inefficient.