The Myth of Artificial Intelligence

The sentient robot has been a fascination of the American public for decades. Hollywood never seems to get tired of the plot line. From movies like The Terminator and WarGames to The Matrix and I, Robot, films exploring the psychological questions of artificial intelligence and humanity or imagining how AI might cause a global apocalypse have been steady fare in the movie industry for at least my lifetime. Our culture has been both fascinated and terrified by the idea of machines gaining a consciousness of their own.

The drama seems to be coming to life these days as well. There is a steady cadence of news stories on the subject: people warning us about new AI developments, people updating us on where AI is today, people sounding the alarm that sentient AI is already here! People expect that sentient machines are coming, are inevitable, and might already be in our midst. But most of the chatter comes from the dramatic and imaginative side of the brain rather than the logical and rational side.

Society is busy trying to predict whether robots will be our friends or our enemies, but the assumption that AI, in the sense of sentient machines, is inevitable is flawed. It is true that machines can do some amazing and unpredictable things, that they can come up with solutions that humans would take eons to find. But thinking machines with a personality are simply an illusory projection of our humanity onto the non-human.

Francis Schaeffer, the historian, philosopher, art connoisseur, and theologian, diagnosed the roots of modern man’s penchant for anthropomorphism in his 1976 work, How Should We Then Live?: The Rise and Decline of Western Thought and Culture. He traces how philosophers, in a craving for autonomy from God, developed a worldview that started from man and attempted to explain the material universe without the input of divine revelation. Although optimistic and proud early on in the days of the Enlightenment, the philosophers over the decades found that their axioms led to a worldview that is devoid of meaning, where man is nothing more than a molecular machine. Man has no reason to live, to strive, to learn, and he loses any basis for distinguishing himself from non-man.

The impacts of such a worldview upon society are far-reaching and unexpected. Schaeffer predicted many of the societal deformations that we see today, but nearly 50 years after his work, we see some additional manifestations of the widespread acceptance of the flawed humanist philosophy. One such symptom is the culture’s fascination with zombie movies. People today connect with the idea of separating humanity from the mass of population around them. While social media and a lack of authenticity are strong contributors to the deep disconnection between people and their neighbors, the widespread loss of the imago dei in our culture is a primary reason for the inclination toward the zombie narrative. We have discarded the belief that each person has dignity as a being made in the image of God and drifted into a bankrupt belief where humans are organic automatons. The degraded view of man promotes the notion that perhaps others are not humans like we are, that they are just sophisticated beasts. It is a small step from there to the idea of humanity as a mass of unthinking monsters who would be happy to destroy you, i.e. the zombie movie archetype.

A complementary side effect of this humanist philosophy that discards man’s dignity is the elevation of non-man to the level of man. This comes out in a couple of ways: the elevation of animals to the human level, and the belief that the human can come from the non-human, i.e. that robots can become sentient. Animal rights activists have become almost mainstream today. In 1957, Americans could understand the story of Old Yeller: no matter how loved or admirable a pet dog is, there can come a time when the right thing to do is to put it down. Today’s movies are flippant about the loss or taking of human life, but the killing of an animal is anathema. Pet ownership has been on the rise in the US over the past 40 years as people see animal companionship as an acceptable, perhaps equivalent, substitute for human relationships. The cultural hub of Hollywood puts animal rights activism on its list of virtues nearly wholesale. Animals, especially the ones that are compatible with our anthropomorphic impulses, have seen a general elevation in our society and culture in conjunction with the spread of humanistic philosophy.

Like animals, Artificial Intelligence is another receptacle of our misplaced anthropomorphic tendencies, except that it is more popular with that segment of society having a more scientific or science fiction bent. There seems to be a general uninformed belief that if people can imagine something, the scientific community will eventually find a way to make it real. Imagination has led to many marvelous inventions, but while we have our pocket computers today, we are still waiting on the flying cars, time machines, teleportation devices, and hover boards. Some ideas simply defy physics. They are logically inconsistent with the laws of the universe. Sentient robots, the way most of society imagines them, are one of those ideas.

One of the confounding contributors to the notion that robots can be human is the scientists who tell people that it can be so. There is a concept in the scientific community today that if we create a machine that we do not ourselves understand, then we have made progress. Scientists used to strive to advance our understanding, and more complicated machines required deeper and further understanding. However, the obvious conclusion is that if someone fully understands his creation, then it is just a complicated machine and nothing more. The scientists seem to have come to a variation upon another of Schaeffer’s themes: that modern man must make a leap into the irrational in order to find meaning in his flawed humanist worldview. The scientists seem to be clinging to the words of the British science fiction author Arthur C. Clarke, who said,

They know that the machine cannot contain the special quality, the magic of humanity, if they understand it. So, they want to create something that they do not understand and hope to find humanity in it somehow. If they cannot fully, rationally understand their creation, it must be an advancement.

Admittedly, there is room for scientific advancement using techniques like these, but the advancement is one step and then a dead end. In advanced physical chemistry, scientists have found it extremely difficult to accurately model complex molecular systems in a way that produces solutions consistent with reality. Some researchers have decided to attack the problem by working around it. They have devised experiments that use the molecules themselves to perform optimizations. It is a sort of simulation with real molecules in the loop. This technique allows the scientists to arrive at answers, but it does not necessarily improve their understanding. Sometimes having the answer helps the scientist loop back around and solve the problem. (It’s always easier to solve a math problem when you know the answer.) But otherwise, the process does not help them further down the scientific road. Science develops a theory and tests it, then modifies or improves the theory and tests again. This step of putting a black box into the experiment is one last-ditch effort to get a clue about how to improve your theory before having to admit defeat. It is not a way forward. The science behind artificial intelligence makes the same mistake.

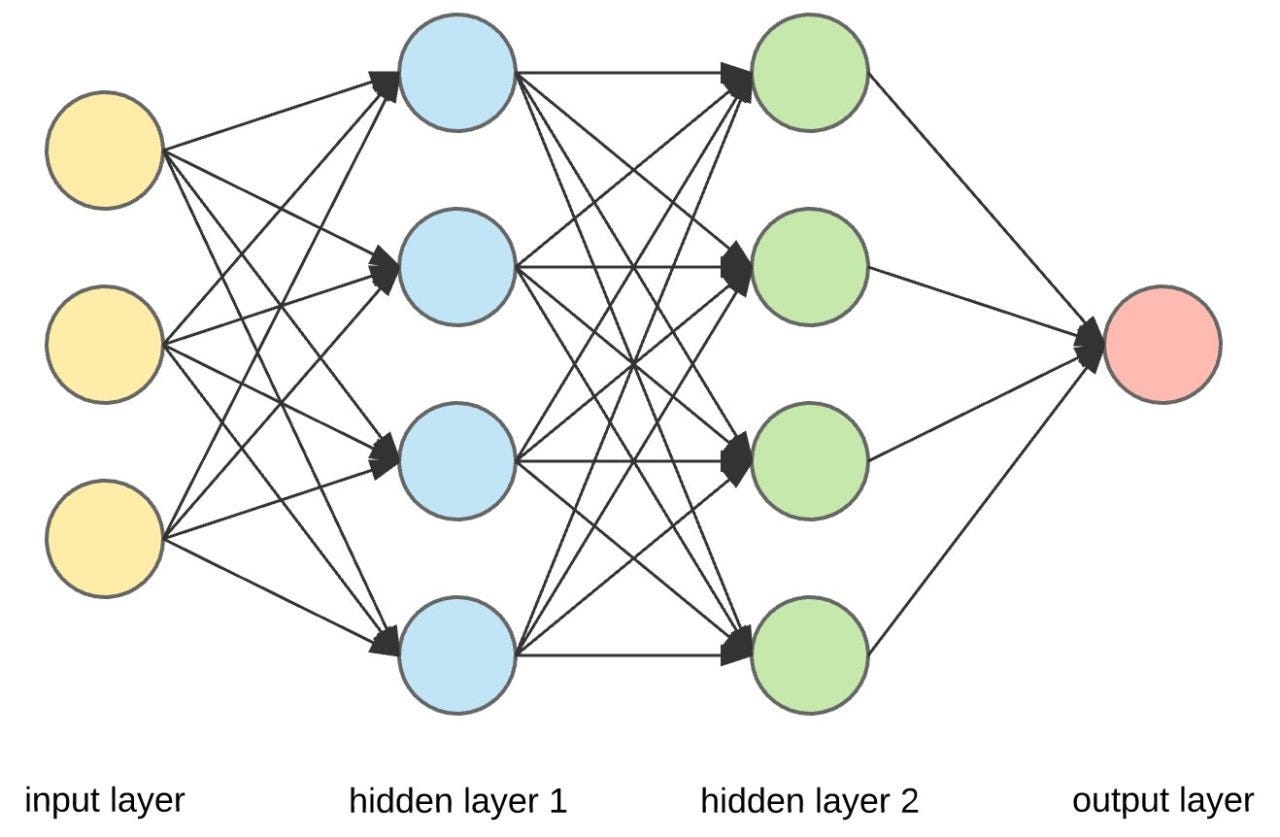

The primary algorithm upon which modern artificial intelligence is based is called a neural network. It is an algorithm that sounds sophisticated and advanced but is really just a way to hide what is actually going on and achieve advancement through obfuscation. The inventors did not begin with some esoteric mathematical basis for an algorithm. They started by trying to copy the human brain, reduced to its simplest possible conception. The algorithm is made up of a collection of nodes. These nodes do nothing but accept inputs and produce outputs. They are grouped together into “layers.” The top-level layer of nodes is connected to whatever inputs the programmer wants to feed into their AI processor. The inputs can be connected to one, some, or all of the nodes. Subsequent layers in the algorithm have nodes where the inputs are the outputs of the previous layer. Again, the connections between nodes of different layers can have a variety of designs. A node combines its inputs in some way to determine whether or not it is active, i.e. whether or not it will send a positive signal as its output. The nodes connected to the output of that node receive that signal as one of their inputs and do their own combination. Each layer produces its outputs from the previous layer’s outputs, and the signals cascade through the layers until they reach the output. After building up a satisfying number of layers, the programmer will “train” the AI. They will give it inputs and observe the outputs of the bottom layer. The programmer’s training process attempts to get the AI to give the correct outputs for a given input by changing the linking between the nodes or how heavily each node weights each of its inputs.
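The cascade of signals described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names, the hard on/off threshold, and the hand-picked weights are hypothetical choices made for this example, not a description of any real AI system, which would typically use smoother activation rules and far more nodes.

```python
# A minimal sketch of signals cascading through layers of nodes.
# All names and weights here are hypothetical, chosen for illustration.

def node_output(inputs, weights, bias):
    """Combine the inputs as a weighted sum; the node is 'active' (1.0)
    only if the sum is positive, otherwise it stays silent (0.0)."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1.0 if total > 0 else 0.0

def layer_output(inputs, layer):
    """Every node in a layer sees the same inputs and produces one output."""
    return [node_output(inputs, weights, bias) for weights, bias in layer]

def forward(inputs, network):
    """Feed each layer's outputs to the next layer as its inputs."""
    signal = inputs
    for layer in network:
        signal = layer_output(signal, layer)
    return signal

# Two layers with hand-picked weights. The first layer has two nodes,
# the second layer has a single node that produces the final answer.
network = [
    [([1.0, 1.0], -0.5), ([1.0, 1.0], -1.5)],  # active if any / if both inputs are on
    [([1.0, -1.0], -0.5)],                     # active if the first node fired but not the second
]

results = {pair: forward(list(pair), network) for pair in [(0, 0), (0, 1), (1, 0), (1, 1)]}
```

With these particular weights the final node is active only when exactly one of the two inputs is on. Nothing in the code "knows" that rule; it falls out of the numbers attached to the connections, which is the sense in which the behavior is stored rather than stated.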

Consider an example to make it clearer. Imagine you want to train your AI to recognize pictures of dogs. You collect thousands of pictures of dogs and you design your neural network to output either a “Yes” signal or a “No” signal. (We will keep the AI very simple for illustrative purposes.) Assume that each of the pictures is made up of 10 megapixels. That’s 10,000,000 potential inputs. Connecting each of these pixels to a node provides color values as inputs to the neural network. The training process jiggers the connections and responses of each node in the network until the output is a “Yes” for every picture of a dog and a “No” for every other picture. The programmer has no idea what exactly the neural network is doing. They do not have an algorithm description for the connections between the nodes. All they know is that they have trained it and that it does what they want it to do. They give a computer an abundance of degrees of freedom and find a way to store information in it in a cryptic and opaque way. If they collect enough input pictures to exhaustively cover the space of all possible input pictures, then any new picture that they feed into the AI will presumably give the correct output. Scientists have had a surprising level of success with such a chaotically designed system, and because of this success they believe that they are advancing science to new levels through this system of connections that they do not fully understand.
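To make the "jiggering" concrete, here is a toy sketch of training in Python. It rests on several assumptions that are not part of the example above: the "pictures" are only four pixels that are each on or off, the network is a single node, the rule for what counts as a "Yes" picture is invented, and the adjustment rule is the classic perceptron update, swapped in here because it is short. Real systems adjust many layers at once with gradient-based methods.

```python
from itertools import product

# Toy training sketch. The data, the labeling rule, and all names are
# hypothetical; the update rule is the classic perceptron rule.

def predict(pixels, weights, bias):
    """Return 1 ("Yes") if the weighted sum of the pixels is positive, else 0 ("No")."""
    total = sum(w * p for w, p in zip(weights, pixels)) + bias
    return 1 if total > 0 else 0

def train(samples, max_epochs=1000):
    """Nudge the weights after every wrong answer until a full pass has no mistakes."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for pixels, label in samples:
            error = label - predict(pixels, weights, bias)  # +1, 0, or -1
            if error != 0:
                mistakes += 1
                # Strengthen or weaken each connection in proportion to its input.
                weights = [w + error * p for w, p in zip(weights, pixels)]
                bias += error
        if mistakes == 0:
            break
    return weights, bias

# Every possible 4-pixel "picture". The invented rule: the answer is "Yes"
# only when the first two pixels are both on.
samples = [(list(p), 1 if p[0] == 1 and p[1] == 1 else 0)
           for p in product([0, 1], repeat=4)]

weights, bias = train(samples)
answers = [predict(pixels, weights, bias) for pixels, _ in samples]
```

After training, the node answers every picture in the set correctly, yet the only record of what it "learned" is a short list of numbers in `weights` and `bias`. Scaled up to millions of inputs and many layers, those numbers become the opaque store of information described above.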

AI “learns” by being given a massive set of input data and being conditioned to respond to the input data in the appropriate way. It is conceivable that such a system could mimic human behavior. Imagine feeding every Twitter post and subsequent responses into a neural network as inputs and adjusting the network according to what responses are deemed good and what are bad. The algorithm could then respond to Twitter posts in a way that appears human. It could interact with humans in a human-ish way upon Twitter, but all it would really be doing is regurgitating an amalgamation of all the inputs from actual humans into a reasonably consistent output. There is no “thinking” as thinking is commonly understood. There is no creativity beyond the random combinations of the humans’ creative inputs. The AI might appear human inasmuch as humans put empty chatter onto Twitter, but it would not be human in any meaningful sense.

All AI algorithms are constrained to suffer the same flaw. The AI that composes music might seem novel and original, but it is just randomly combining musical components that have been composed by actual humans into a previously unrealized combination. It has been conditioned to produce only the outputs that in some sense “sound good” to the programmer, but it is not creatively producing music with any meaning behind it. If that passes for “sentient” or “human,” that’s just another example of the bankrupt humanist philosophy that sees humans as a mass of electrical connections attached to an organic body. If you reduce humanity to a slew of electrical signals in the brain and then reduce the brain to a collection of nodes randomly connected together producing outputs from inputs, then AI can become human, but you also have a malnourished concept of humanity. To say that a robot can be sentient is just another aspect of the pessimistic, degraded conclusions of the bankrupt humanist philosophy issuing from the attempt to make mankind autonomous and separate from God.

The Christian perspective understands mankind differently. It understands that humans are both body and soul. It understands that God made man as an entirely distinct creature when He said,

Let us make man in our image, after our likeness. And let them have dominion over the fish of the sea and over the birds of the heavens and over the livestock and over all the earth and over every creeping thing that creeps on the earth.

The Christian worldview believes that reality consists of more than the physical universe. It acknowledges that man is small and feeble like God’s other creatures, but then says,

Yet you have made him a little lower than the heavenly beings

and crowned him with glory and honor.

Schaeffer recognizes that the concept of the dignity of man is a product of Western thought and culture developed upon a base of widespread societal belief and adherence to Christian principles. The loss of this base via the migration of a majority of society to philosophical tenets consistent with an atheistic humanist philosophy has resulted in the demotion of mankind and the degradation of his dignity. It has resulted in a society that increasingly discards the belief that life is precious, discards the belief that mankind is unique and distinct from the animals, and accepts the premise that an algorithm producing outputs from inputs can achieve equivalence with humans on some level.

Artificial Intelligence certainly deserves serious thought and reflection. Computers and machines can do things that a programmer does not expect. Giving an AI algorithm too much authority and power to effect changes can be dangerous, as some of the autonomous driving accidents have shown us. IBM and others have shown that Artificial Intelligence can mimic human behavior in a very realistic way, and the degree to which AI is allowed to replace genuine human interactions in society is another topic requiring serious thought and debate. But the dreams of computers waking up and either joining society as another species of creature or deciding to exterminate and replace humans are destined to remain confined to the realm of novels, movies, and imagination.