In a previous article, I described the founding and development of information theory as a field of study. It is a method for quantifying information, with communication as its initial application. The concepts developed there apply to many other areas, including the physical world at a fundamental level. While it is natural to think about the physical world as a medium for storage and transmission of information content – words on paper, patterns in radio signals, vibration of sound waves in verbal communication, etc. – it can be shown that the physical world actually contains information inherently. Any physical system has a quantity of information as a physical characteristic.

Qualitative indicators of information are generally apparent in the physical world. We intuitively know that there is information in the world when we operate under the assumption that its details can indicate its past and how it got to be in the state that we find it. Any kind of order or structure that is not “natural” betrays human activity. Square patterns on the ground might indicate the remains of a past civilization. Ordered etchings on paper signify written communication rather than accidental random markings. Many state and federal parks have outlawed the stacking of stones because it pollutes the pure natural beauty with the unmistakable mark of human activity. The fingerprint of human activity is information built into the physical world.

Human activity adds information to the physical world. Natural phenomena can leave behind information as well. Scientists find clues about the physical world by collecting the bits of information left in the earth. Deviations from homogeneity teach us things. Why do the trees stop suddenly upon the acclivities of a tall mountain? Why are there layers of color in the Grand Canyon rather than one solid color? We see that structure, variety, and patterns are stores of information.

But these are only symptoms of a much deeper relationship between information and the physical world. To understand the root of it, we venture into the realm of the hard sciences, to thermodynamics and especially statistical thermodynamics. The key concept in both information theory and thermodynamics is entropy. Information theory ties entropy and information together: entropy is uncertainty, and information is the resolution of that uncertainty. In thermodynamics, entropy is defined in terms of the seemingly unrelated parameters of volume, pressure, energy, temperature, and the number of molecules in a system. However, the deeply insightful work of the pioneers of statistical thermodynamics ties the two concepts together.

With some minor assumptions about large numbers of molecules, statistical thermodynamics has worked out a formula for the probability that a particular system will be in a particular state. The system has to be defined by the basic parameters of thermodynamics, i.e. the volume, temperature, and number of molecules in the system. Surprisingly, such a scant amount of information is enough to define the probability of state j,

$$P_j = \frac{e^{-E_j/kT}}{\sum_i e^{-E_i/kT}}$$

where $E_j$ is the energy of state j, $k$ is Boltzmann's constant, and $T$ is the temperature.

The denominator of this formula is a quantity that is extremely useful in statistical mechanics and has its own name and variable. It is called the partition function and is defined,

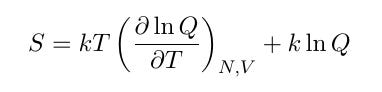

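This partition function is involved in the formulas that link statistical mechanics to the classical quantities of thermodynamics, one of which is entropy. Writing $Q$ for the partition function and $k$ for Boltzmann's constant, the formula for the entropy of a physical system is,

$$S = kT\left(\frac{\partial \ln Q}{\partial T}\right)_{N,V} + k \ln Q$$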

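which probably looks a little scary. Amazingly, this formula can be rewritten into a simple, familiar form. Working out the derivative and combining the terms leads to an intermediate formula in terms of the state probabilities $P_j$, the state energies $E_j$, and the partition function $Q$,

$$S = -k \sum_j P_j \left( -\frac{E_j}{kT} - \ln Q \right)$$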

Condensing this formula by regrouping its terms into the form of the state probability defined above gives the result,

$$S = -k \sum_j P_j \ln P_j$$

The physical entropy of a system is proportional to the information entropy of a communication channel[1]. The proportionality constant is effectively just a change of units from the physical realm to the information realm. This formula means that physical entropy is equivalent to information. Any physical system can be thought of as having a quantity of information built into it. There is a direct link between a system’s form and an inherent amount of information infused within it.
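The equivalence described above can be checked numerically. The following is a minimal sketch, not taken from the original derivation: it assumes the standard canonical-ensemble forms (a partition function that sums Boltzmann factors over states, and the entropy expressed through the temperature derivative of its logarithm), and the three energy levels are hypothetical values chosen only for illustration. It computes the entropy both ways and compares the results.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def partition_function(energies, temperature):
    """Sum of Boltzmann factors exp(-E_j / kT) over all states."""
    return sum(math.exp(-e / (K_B * temperature)) for e in energies)


def state_probabilities(energies, temperature):
    """Probability of each state: its Boltzmann factor divided by Q."""
    q = partition_function(energies, temperature)
    return [math.exp(-e / (K_B * temperature)) / q for e in energies]


def entropy_thermodynamic(energies, temperature, rel_step=1e-6):
    """S = k*T*(d ln Q / dT) + k*ln Q, with a central-difference derivative."""
    dt = temperature * rel_step
    ln_q_plus = math.log(partition_function(energies, temperature + dt))
    ln_q_minus = math.log(partition_function(energies, temperature - dt))
    dlnq_dt = (ln_q_plus - ln_q_minus) / (2 * dt)
    ln_q = math.log(partition_function(energies, temperature))
    return K_B * temperature * dlnq_dt + K_B * ln_q


def entropy_information(energies, temperature):
    """S = -k * sum_j P_j ln P_j, the information-entropy form."""
    probs = state_probabilities(energies, temperature)
    return -K_B * sum(p * math.log(p) for p in probs if p > 0)


# Hypothetical three-level system (energies in joules), assumed for illustration.
energies = [0.0, 1.0e-21, 3.0e-21]
temperature = 300.0  # kelvin

s_thermo = entropy_thermodynamic(energies, temperature)
s_info = entropy_information(energies, temperature)
print(f"thermodynamic form: {s_thermo:.6e} J/K")
print(f"information form:   {s_info:.6e} J/K")
```

The two printed values should agree to within the small error of the numerical derivative, which is the point of the rewritten formula: the thermodynamic expression and the probability-weighted logarithm are the same quantity.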

The term “system” is so general that it does not always convey concrete ideas of the vast scope that it covers. A system can be any isolated group of matter. A glass of water is a system. A human cell is a system. A car is a system. A tree is a system. The planet is a system. Any of these systems has an entropy level that equivalently represents a quantity of information.

Statistical mechanics does not actually care about the absolute level of entropy in a system. What is important is the change in entropy when a system undergoes a transformation, or a reaction, or some other kind of change. Information behaves the same way. As described in Information and Life: Part 1, information only comes from a change in uncertainty. Before a listener receives a signal, there is an amount of uncertainty about what the symbols in the message will be. Once the message arrives, the uncertainty is eliminated. Entropy goes from some level, H, to zero and acquired information goes from zero to H. When a physical system goes through a transition from one state to another, there is a change in entropy. State one has an entropy level, S1, state two has an entropy level, S2, and the change of going from one state to the other is ΔS = S2 - S1. If ΔS is positive, then entropy has increased in going from the first state to the second and the system's inherent information level has decreased. If ΔS is negative, entropy has decreased and the internal information of the system has increased.
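A concrete change in entropy helps make the sign convention tangible. The sketch below uses an example that is not in the article: an ideal gas doubling its volume at constant temperature, for which the textbook result is ΔS = N k ln(V2/V1). The function names and the choice of one mole of gas are assumptions made for illustration. Dividing the result by k ln 2 converts it from joules per kelvin into bits.

```python
import math

K_B = 1.380649e-23   # Boltzmann constant in J/K (exact SI value)
N_A = 6.02214076e23  # Avogadro constant in 1/mol (exact SI value)


def expansion_entropy_change(n_molecules, v_initial, v_final):
    """Delta S = N k ln(V2/V1) for an ideal gas at constant temperature."""
    return n_molecules * K_B * math.log(v_final / v_initial)


def entropy_to_bits(delta_s):
    """Convert an entropy in J/K to bits by dividing by k ln 2."""
    return delta_s / (K_B * math.log(2))


# Assumed scenario: one mole of ideal gas doubles its volume.
delta_s = expansion_entropy_change(N_A, v_initial=1.0, v_final=2.0)
bits = entropy_to_bits(delta_s)

print(f"delta S = {delta_s:.3f} J/K")
print(f"equivalent to {bits:.3e} bits")
```

Under these assumptions ΔS is positive, roughly 5.76 J/K, which corresponds to one bit per molecule: after the expansion, it is no longer known which half of the container each molecule occupies.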

Here, thermodynamics contributes its most philosophically significant finding: the famed Second Law of Thermodynamics. This law states that any reaction that will spontaneously occur will have a positive change in entropy. For a reaction to occur, entropy must increase. It should be noted that this law holds for a closed (strictly speaking, isolated) system. For a particular component of a system, the entropy can decrease, but in the system as a whole, entropy must increase. The meaning of this law and its philosophical implications have been debated for decades, and while it does not say anything materially different, couching the point in terms of information rather than entropy is more illuminating.
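The difference between a component and the whole system can be illustrated with a small calculation. This is a sketch under stated assumptions rather than anything from the article: two heat reservoirs large enough that their temperatures stay fixed, with a hypothetical 1000 J of heat flowing from the hot one to the cold one. Each reservoir's entropy change is the heat it receives divided by its temperature.

```python
def reservoir_entropy_changes(heat_joules, t_hot, t_cold):
    """Entropy changes when heat flows from a hot to a cold reservoir.

    Assumes both reservoirs are large enough to stay at fixed temperature.
    Returns (hot component, cold component, whole system) in J/K.
    """
    ds_hot = -heat_joules / t_hot   # the hot reservoir loses heat
    ds_cold = heat_joules / t_cold  # the cold reservoir gains the same heat
    return ds_hot, ds_cold, ds_hot + ds_cold


# Hypothetical values: 1000 J flowing from 400 K to 300 K.
ds_hot, ds_cold, ds_total = reservoir_entropy_changes(1000.0, 400.0, 300.0)

print(f"hot component:  {ds_hot:+.3f} J/K")
print(f"cold component: {ds_cold:+.3f} J/K")
print(f"whole system:   {ds_total:+.3f} J/K")
```

The hot reservoir's entropy falls, yet the total for the combined system rises, which is the pattern the second law requires.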

Scientific analysis of natural reactions and processes has both discovered and confirmed the second law of thermodynamics. The general implication of the law is that the world and everything in it are falling apart. It is a rather pessimistic conclusion, and many people will not accept it. They will point to life renewing and building up rather than falling apart. And it is true that plants and animals can decrease their entropy. They consume food, air, and sunlight and drive down their internal entropy. This is the point of the “closed system” aspect of the law. While doing things to lower their own entropy, living systems increase the entropy around them. Non-living systems can have negative changes in entropy too, but in all cases, the system is a sub-component of a larger system, and the larger system will increase in entropy. Thankfully, the earth has a nearly infinite source of energy in the form of the sun. The earth can absorb the energy and drive down its entropy level by casting entropy-increasing radiation out into space. The earth as a sub-component of the universe can temporarily decrease its entropy while the universe as a whole increases in entropy.

In terms of information, the second law of thermodynamics has a slightly different connotation. It states that information is lost in every spontaneous reaction or process[2]. Information is leaking out of the universe. The sun provides an effectively infinite well of information that the earth can draw on to restore the information that it loses through spontaneous reactions. So while the earth can forestall the second law for some 5 billion years, the universe as a whole is losing information. The idea of a universe with monotonically increasing entropy obscures the fact that there is a ceiling. The information perspective makes the situation clearer by converting the ceiling to a floor. There is no such thing as negative information. As information leaks out of the universe, the universe approaches a state of zero information. Because information is always decreasing, the decrease cannot continue indefinitely. If the energy in the universe were to reach a state where it was homogeneously distributed across space, then the universe would again become “formless and void,” bereft of information along with any structure or character. This thought experiment raises the question, “If information is leaking out of the universe and dripping away to nothing, where did all of this information come from to begin with?” This question is one of the pillars of the next part in the information series, Information and Life: Part 3 – Information and Theology.

The physical world is imbued with information. Physicists have been characterizing systems and reactions by entropy and changes in entropy for nearly two centuries. Statistical mechanics and Information Theory show us that those scientists have equivalently been studying information and changes in the level of information between system states. Owing to its relatively recent development and its restriction largely to the field of communication, the information perspective likely has much left to add to fields like thermodynamics and how we understand its conclusions.

[1] The astute reader might have noticed that the entropy formula in Part 1 was a logarithm base 2 while the formula here uses a natural logarithm. The base of the logarithm is changeable in Information Theory. A. I. Khinchin’s Mathematical Foundations of Information Theory says, “the logarithms are taken to an arbitrary but fixed base,” meaning the bases can be changed as long as quantities calculated with different bases are not mixed.

[2] To clarify any confusion about the term “spontaneous” reaction: every reaction that occurs happens spontaneously. There is no sense in which some reactions can happen spontaneously and others can be coerced into happening. The only way people can cause a reaction to occur is to create the conditions that cause it to happen spontaneously.